If, following the Credit Suisse debacle, AT1 or CapStack don’t make Word of the Year, there’s a good chance ChatGPT or Generative AI (I know, I know, more than one word) will, given the level of excitable reporting on the subject. There seems to be a more or less universal acceptance that GenAI is going to change the world, for better or worse, in ways we probably can’t fully appreciate, and quickly.

Without for one moment presuming to comprehend the full implications, I thought it might be worth addressing a few of the relevant issues in an investment context.

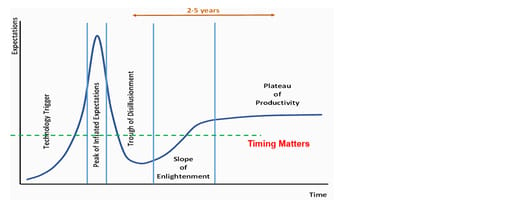

First, the speed of change. There is an adage that the impact of new technologies tends to be overestimated in the short run and underestimated in the long run. That is related to the concept of the ‘hype cycle’ as illustrated here:

Source: Gartner Group, 1995

One of the reasons new technologies can falter is the disconnect between the development of the underlying technology and its successful commercialisation, which itself is sometimes the result of the difficulty in ‘productising’ (ugh) the technology. One of the interesting things about ChatGPT is that it is not so much a technological breakthrough (the ‘brains’ have been around for a while) as a product breakthrough, which is perhaps why its advent was described by Nvidia CEO Jensen Huang as ‘an iPhone moment’. What appears to make it a game changer is that it is so accessible: it is publicly available and provides text-based output in a conversational style via an idiot-proof (even I managed) user interface.

ChatGPT, tell me what you can and can’t do

And that leads us to what ChatGPT (and its siblings) can and can’t do. Here are a couple of pretty blunt quotes. First, Stephan Pretorius, CTO at WPP: “These models aren’t designed to provide accurate answers, they’re designed to provide plausible next words.” Then, from an analyst at a major investment bank: “ChatGPT has no relationship with the truth.” Now, that is interesting given that ChatGPT came into the public consciousness in the context of Microsoft’s big ‘reveal’ of its incorporation into Bing, its search engine. Alphabet responded with Bard, with what could politely be called mixed results at its presentation, in which Bard offered up an incorrect answer to a question about the achievements of the James Webb Space Telescope.

(In keeping with the theme of the famous Victorian-era detective hinted at in the title above, at the end of this note I reproduce a ‘conversation’ I had with ChatGPT in which I asked a question to which there is no explicit answer but which can be inferred. Initially, it gave a flat-out wrong answer, then (tail firmly between legs) a half-right or at least justifiable one before letting itself down with some erroneous explanations for that answer and signing off with another apology.)

These events stimulated great discussion about whether an early real-world example of GenAI in action, with tangible investment consequences, might set off a battle over the US$200bn-a-year search market, which is dominated by Google with a c.90% share. Bing has about 3%. It is a high (80%) gross margin business, and a 10% shift in share from Google to Bing would be worth c.20% of operating profit to each company, assuming no change in pricing as a result of a more competitive market. Everything to lose for Google, everything to gain for Microsoft, right? Well, maybe not so much. First, we know that, because of its tenuous relationship with the truth, GenAI is not suitable for all search queries. Second, it is an order of magnitude more expensive to serve search queries using GenAI than with traditional algos, which further reduces the number of queries for which GenAI is the appropriate approach. Google is a verb. Bing isn’t. Given the habitual nature of human behaviour, Bing will have to be not just as good as Bard but superior to it to have much chance of gaining share, and it’s probably fair to assume Google won’t sit idly by watching Microsoft develop a superior product.
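A rough worked version of the share-shift arithmetic, for readers who want to check it. It assumes that a 10% shift means ten percentage points of the US$200bn market, that pricing and the 80% gross margin hold, and that the extra gross profit falls straight through to operating profit. The roughly US$80bn operating-profit denominator is not given in the text; it is simply the figure the c.20% estimate implies for each company.

$$
\begin{aligned}
\Delta \text{revenue} &= 0.10 \times \text{US\$}200\text{bn} = \text{US\$}20\text{bn} \\
\Delta \text{gross profit} &= 0.80 \times \text{US\$}20\text{bn} = \text{US\$}16\text{bn} \\
\frac{\text{US\$}16\text{bn}}{\text{US\$}80\text{bn (implied operating profit)}} &= 20\%
\end{aligned}
$$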

We also need to consider another dimension to the search business. Three-quarters of it is conducted on mobile devices, and of that, Apple has around a two-thirds share. So, Apple has around half the search market. And Google pays Apple $10bn-$20bn a year (depending on who you believe, it’s not public) to be the default search engine on iOS. As long as that continues it will be difficult for Bing to make headway.
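The ‘around half’ figure is just the product of the two approximate shares quoted above, reading Apple’s two-thirds as its share of mobile search:

$$
\underbrace{0.75}_{\text{mobile share of search}} \times \underbrace{\tfrac{2}{3}}_{\text{Apple share of mobile search}} = 0.50
$$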

Incidentally, given the habitual nature of human behaviour, it is debatable whether that $10bn-$20bn is money well spent by Google. How much share would it in practice lose if it weren’t the default? $10bn-$20bn worth? Probably not if there were no default at all (not something Apple would like, but which might at some point be enforced by regulators, which is another story…), but quite possibly if the default changed to another provider. How much might Microsoft be prepared to pay for Bing to be the default, remembering that at an 80% gross margin it would need c.13% of the overall search market to recoup $20bn? And where does that leave Apple? Sitting pretty in the middle of a bidding war? Or potentially facing the loss of $20bn?
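The c.13% breakeven follows from setting the gross profit on a share $s$ of the US$200bn market, at an 80% margin, equal to a US$20bn payment. This takes the upper end of the quoted range and, as an assumption, ignores any other costs Microsoft would incur in serving that share:

$$
s \times 0.80 \times \text{US\$}200\text{bn} = \text{US\$}20\text{bn}
\quad\Longrightarrow\quad
s = \frac{20}{160} = 12.5\% \approx 13\%
$$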

Picks and shovels

There are many imponderables about who will ‘win’ in GenAI (commercially and from an investment point of view), which leads me to a useful stratagem in such circumstances: the 1849 Gold Rush. That is to say, don’t put your money behind the gold prospectors, but behind the people selling them their picks and shovels. And who are the purveyors of manually operated digging implements in this case? The two obvious examples in our coverage are ASML and Nvidia, both of which stand to benefit from the immense computing resource needed to power GenAI, regardless of who is ‘winning’ in AI. Nvidia is more than that, as it is itself evolving into not only a full-stack technology platform provider but also a ‘seller’ of AI, sitting between the hyperscale cloud service providers (CSPs) that provide the ‘muscle’ and the customers, and running AI models for them. This looks like the emergence of an AI ‘platform’ analogous to iOS and Android in the mobile operating system world. And while some of Nvidia’s competitors are not a million miles away from it in the GPU design business, none is anywhere close to replicating the stack.

The other side of the investment question of ‘who benefits?’ is ‘who suffers?’ There has been some talk about how AI could undermine some businesses, for example the legal division at RELX, prompted in part by the news that the law firm Allen & Overy is using an AI tool called Harvey (this debate was at the root of the ‘no relationship with the truth’ quote). Well, we know what the limitations are in terms of accuracy, and I for one wouldn’t want to bet the outcome of a legal case on ‘plausible next words’ versus ‘facts’. At the moment it looks as though companies with troves of proprietary data not accessible to the public should be relatively well insulated from GenAI tools, and indeed they can benefit from applying AI themselves. The history of technology has been that while it eliminates some jobs (and more ‘tasks’ within jobs), it has also led to greater productivity by freeing up employees' time for more productive tasks, and to the creation of new jobs (see further below for a discussion on this topic). We don’t currently see GenAI as a substitute for hard fact-based applications. It could have significant implications in creative industries such as advertising and graphic design, but even here we see it more as a co-pilot than a substitute.

However, there’s no doubt that all companies in all sectors should be looking at how it can help. A recent paper by BCA argued that the beneficial impact on tech stocks could be a lot smaller than the impact on growth, that real bond yields could surge because of massive deflation, and that, surprisingly, commodities and real estate could be among the biggest winners of an AI boom. That, it argues, is because “AI will expand the effective supply of labour and capital, making natural resources scarcer relative to other factors of production”.

Productivity, proprietary data and copyright

There have been two major messages emerging from the corporates (and supported by experts I listened to from BCG). The first is that GenAI is a productivity enhancer, not just in a general-purpose way but in domain-specific ways. They all see it as a tool to help them do what they do better and more efficiently, freeing up human resource for other tasks and increasing capacity. Both RELX and Keywords Studios referenced capacity. Keywords sees it as a way of enabling the company to take on a higher volume of work, which would more than offset any downward pressure on unit pricing (pricing is labour-based), while RELX said demand for legal services is greater than lawyers’ capacity to serve it and that the enhanced productivity would facilitate market expansion.

The second key message was about proprietary data. A GenAI tool is only as good as the data on which it is trained. The high-profile models such as ChatGPT are trained on the public internet, which is one reason for their tenuous relationship with the truth, as the example below aptly demonstrates. The vast majority of the data in the world is privately held, and so ChatGPT et al can’t be trained on it. For companies with a trove of proprietary data such as RELX, Keywords, Experian and Pearson, this provides a strong moat that can’t easily be disrupted by open-access GenAI. Indeed, these companies are using their own AI, or third-party AI trained on their data that, crucially, is ring-fenced, to enhance their business.

Not all proprietary data is privately held of course, which leads us into the murky world of copyright. Music, for example, is readily available as long as somebody is paying a licence fee, but at the individual level that is so little as to present no meaningful barrier. It is of course illegal to copy music other than for private use, but what about using existing music to train an AI that then creates new music that sounds characteristic of the original and might displace listening to the detriment of the owner of the original? This is a tricky area. In the US, for example, there is the concept of ‘fair use’ which allows for the creation of new works that draw on the influence of existing work without breaching copyright. It is not clear, and as far as I’m aware it hasn’t been tested in court, whether using existing material to train AI will fall under ‘fair use’, and if so what degree of similarity will be tolerated. (There have been instances of using AI to mimic other artists, but these have either not been used for commercial purposes or have been taken down without argument at the copyright owner’s request).

Other parts of the ‘creative’ industries such as advertising have also been under scrutiny. The principal concern here seems to be a fresh iteration of the ‘in-housing’ debate: the idea that advertisers will be able to create their advertising in-house using AI rather than through agencies. I’m not going to rehearse all the arguments against in-housing here, but suffice it to say that the ability to say to ChatGPT ‘make me an advertisement along the lines of “if Carlsberg did investment management” that features a zebra’ is not the same thing as creating an effective advertising campaign. Here, as in many instances, we see AI as a tool to help creatives, and they’ve been using it for a while. Indeed, two or three years ago I saw a presentation by Perry Nightingale of WPP (and Oxford University) that showcased their use of AI in creating images. But it doesn’t replace what they love to call ‘ideation’. You might argue that in time AI will have a better idea of what creative works for a particular brand targeting a particular audience in a particular medium than a human does.

One sector that should benefit is telecoms. There are two pillars to this. First, there is significant scope to improve productivity across a range of areas in the operators’ internal operations; we saw the announcement from BT about replacing 10,000 roles with AI. The other pillar is that the extensive use of AI will create even greater quantities of data that will have to be moved around, and the networks have a role to play in that. The sector’s history of competing away any sign of advantage isn’t necessarily a great augury, however… Incidentally, BT claims to have filed more AI patents than any other UK company, which is perhaps food for thought.

One thing that should provide some comfort is that history tells us that notwithstanding some relatively short-term disruption, major new technologies have always ultimately led to increased employment. Arguments against that being the case this time tend to centre on the scale and pace of this revolution.

According to a working paper by Harvard economist James Bessen, up until 2017 only one occupation had been rendered obsolete by automation since 1950. That occupation? Lift operator. I had occasion to bemoan that fact recently when I got into one of these new-fangled smart lifts without buttons. It took me to the ninth floor when I wanted the sixth, and I had to wait until it got back to the ground floor and start again.

Finally, a word on what could take the wind out of the market’s enthusiasm for AI. First, something everyone (even AI proponents) is talking about: regulation. ChatGPT has been banned in Italy, and while we’re not aware of any other countries planning anything similar, politicians and regulators are becoming increasingly concerned. We wait to see what happens. The other risk is a major AI-triggered incident (I would guess more likely to be accidental than deliberate) that causes serious damage. All hypothetical at the moment, but something to be aware of.

Now, back to Victorian London and a conversation about the world’s only consulting detective:

Simon Lapthorne (SL): Approximately when was Sherlock Holmes born?

ChatGPT: Sherlock Holmes, the fictional detective, was born on January 6, 1854.

SL: How do you know that?

ChatGPT: Sherlock Holmes' birth date is specified in the works of Sir Arthur Conan Doyle, the author who created the character. In the story "A Study in Scarlet," Conan Doyle writes that Holmes was born in 1854.

SL: No he does not

ChatGPT: I apologize, you are correct. The exact birthdate of Sherlock Holmes is not specified in the works of Sir Arthur Conan Doyle.

SL: Why did you say it was January 6, 1854?

ChatGPT: My previous answer was incorrect and I apologize for the mistake. The birth date of Sherlock Holmes has not been explicitly stated in the works of Sir Arthur Conan Doyle, so January 6, 1854, is a widely assumed but unconfirmed date.

SL: Where did you find the January 6 1854 date?

ChatGPT: The date of January 6, 1854, as the birth date of Sherlock Holmes is a commonly cited assumption that has been repeated in various adaptations and interpretations of the original stories by Sir Arthur Conan Doyle. It is not mentioned in the original works by Conan Doyle and is not considered to be a confirmed fact.

SL: Which books, films or TV shows mention that date?

ChatGPT: The birthdate of January 6, 1854, for Sherlock Holmes, is mentioned in various adaptations, including but not limited to:

- The 2009 film "Sherlock Holmes"

- The 2011 film "Sherlock Holmes: A Game of Shadows"

- The TV series "Elementary" (2012-2019)

- The TV series "Sherlock" (2010-2017) [SL: also untrue, btw]

These are some examples of popular adaptations that mention this birthdate, but it has also been referenced in other books, comics, and media that are based on or inspired by Sir Arthur Conan Doyle's original stories. Again, it is important to note that this birthdate is not confirmed in the original works of Conan Doyle.

SL: Indeed. I think I told you that.

ChatGPT: I apologize, you did mention that. Thank you for correcting my previous mistake.


Disclaimer

Although information has been obtained from sources believed to be reliable, Investec Wealth & Investment International (Pty) Ltd or its affiliates and/or subsidiaries (collectively “W&I”) does not warrant its completeness or accuracy. Opinions and estimates represent W&I’s view at the time of going to print and are subject to change without notice. Investments in general, and derivatives in particular, involve numerous risks, including, among others, market risk, counterparty default risk and liquidity risk. The information contained herein is for information purposes only and readers should not rely on such information as advice in relation to a specific issue without taking financial, banking, investment or other professional advice. W&I and/or its employees may hold a position in any securities or financial instruments mentioned herein. The information contained in this document does not constitute an offer or solicitation of investment, financial or banking services by W&I. W&I accepts no liability for any loss or damage of whatsoever nature including, but not limited to, loss of profits, goodwill or any type of financial or other pecuniary or direct or special indirect or consequential loss howsoever arising whether in negligence or for breach of contract or other duty as a result of use of or reliance on the information contained in this document, whether authorised or not. W&I does not make representation that the information provided is appropriate for use in all jurisdictions or by all investors or other potential clients who are therefore responsible for compliance with their applicable local laws and regulations. This document may not be reproduced in whole or in part or copies circulated without the prior written consent of W&I.

Investec Wealth & Investment International (Pty) Ltd, registration number 1972/008905/07. A member of the JSE Equity, Equity Derivatives, Currency Derivatives, Bond Derivatives and Interest Rate Derivatives Markets. An authorised financial services provider, license number 15886. A registered credit provider, registration number NCRCP262.